🏭 The Week Google Fired Back

Field notes from the AI trenches—what actually matters this week

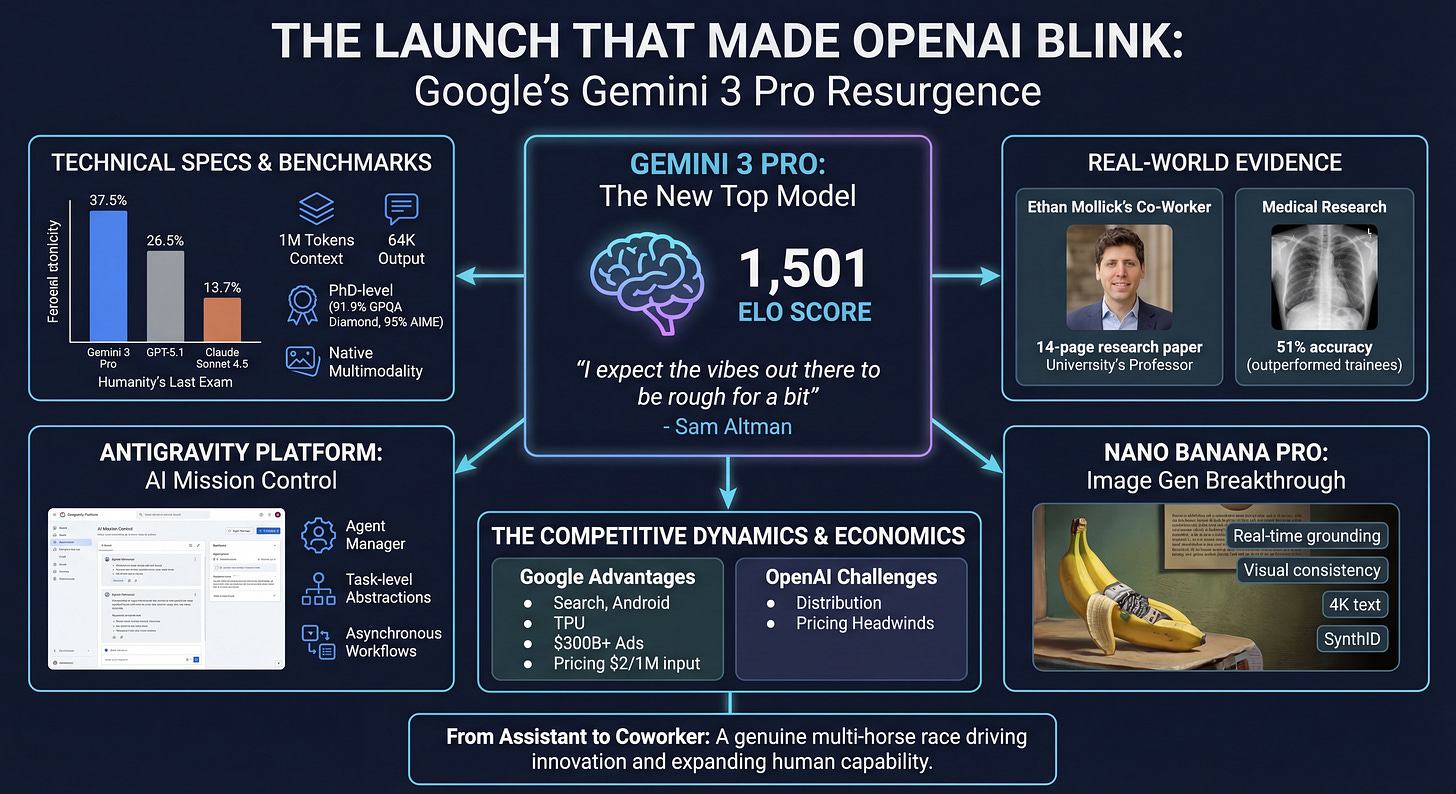

This week’s issue of The Prompt Factory is longer than usual, giving us room for a deeper dive into Google’s announcements: Gemini 3 Pro, Nano Banana Pro, and Antigravity. We hope you enjoy this extended coverage of these significant releases.

Three years ago, we marvelled at AI writing poems about otters. This week, an AI agent built its own research environment, formulated original hypotheses, and debated statistical methodology with a Wharton professor. The gap between those moments? Roughly 1,100 days.

AI is in the process of graduating from party trick to coworker.

Google’s Gemini 3 Pro launch didn’t just move benchmarks; it moved the competitive landscape. OpenAI’s Sam Altman sent an internal memo warning staff that the vibes would be “rough for a bit”. Elsewhere, independent researchers found a general-purpose AI model outperforming radiology trainees on complex diagnostic cases, and non-technical founders are winning government contracts with vibe-coded solutions.

Meanwhile, China’s open-source models are quietly capturing 80% of startup adoption, and a new “AI Scientist” can, by its beta users’ estimates, do six months of research work in a day.

Let’s unpack one of the biggest weeks in AI.

FEATURE: Google Fires Back—The Gemini 3 Era and What It Means

💣 The Launch That Made OpenAI Blink

Google unveiled Gemini 3 Pro this week, and OpenAI, for perhaps the first time since ChatGPT’s launch in November 2022, acknowledged the competitive pressure, albeit in private. CEO Sam Altman wrote in an internal memo to staff: “I expect the vibes out there to be rough for a bit”.

What happened

Gemini 3 Pro topped the LMArena leaderboard with a breakthrough Elo score of 1,501, making it, by that measure, the highest-rated publicly available AI model. It launched simultaneously in Google Search (the first time Google has put a new model into Search on launch day), the Gemini app (650 million monthly users), and a new development platform called Antigravity. Within hours, the AI community was calling it a potential inflection point.
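An Elo score only means something relative to other models. As a rough guide, the classic Elo formula turns a rating gap into an expected head-to-head preference rate. The sketch below assumes plain Elo. LMArena’s published methodology fits a statistical model to crowd-sourced pairwise votes, so treat this as an approximation. The rating gaps are hypothetical, not real leaderboard data.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Classic Elo expected score: the probability that model A is preferred over model B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


if __name__ == "__main__":
    top = 1501  # Gemini 3 Pro's reported LMArena score
    # Hypothetical rating gaps to a rival model; these are not real leaderboard figures.
    for gap in (0, 25, 50, 100):
        p = expected_win_rate(top, top - gap)
        print(f"{gap:>3}-point lead: preferred in about {p:.0%} of head-to-head votes")
```

Under this approximation, a 50-point lead means the higher-rated model would be preferred in only about 57% of head-to-head votes. That is why small gaps at the top of a leaderboard deserve some caution.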

What it does

The technical specs tell the capability story:

Processes 1 million input tokens across text, images, video, audio, and PDFs

Generates up to 64,000 tokens of output

Crushes benchmarks: 37.5% on Humanity’s Last Exam (versus GPT-5.1’s 26.5% and Claude Sonnet 4.5’s 13.7%)

PhD-level performance: 91.9% on GPQA Diamond (graduate-level science questions), 95% on AIME 2025 mathematics

Agentic coding: 76.2% on SWE-bench Verified, which is competitive rather than a leap ahead

Native multimodality: understands and reasons across all input types simultaneously

“Deep Think” mode for complex problems that require extended reasoning time

But here’s what matters more than benchmarks: real-world deployment evidence.

Professor Ethan Mollick at Wharton gave Gemini 3 decade-old crowdfunding research data in corrupted file formats. The AI didn’t just recover the data—it formulated original research hypotheses, created novel statistical measures using natural language processing (metrics Mollick hadn’t suggested), conducted sophisticated analysis, and produced a 14-page research paper with original insights.

“I am debating statistical methodology with an agent that built its own research environment,” Mollick wrote. “The era of the chatbot is turning into the era of the digital coworker”.

Independent medical testing points the same way. On 50 complex diagnostic radiology cases, Gemini 3 Pro achieved 51% accuracy, making it the first generalist AI model to outperform radiology trainees (45%), though it remains well below board-certified radiologists (83%). Previous frontier models all scored below trainee level; GPT-5 managed only 30%. Remember, this is a generalist model, not one trained specifically for the task.

The Antigravity platform: Mission control for AI agents

Google didn’t just ship a better model—they reimagined the development experience.

Antigravity is an agentic development platform that moves beyond the “AI assistant embedded in your IDE” paradigm used by other coding agents.

Instead, its agents work across three places:

Code Editor: For writing and editing code.

Terminal: For installing dependencies, running build commands and debugging tests.

Browser: For running your application, navigating screens and visually verifying that things work.

Instead of manually running the steps to fix a bug, you can now ask: “Fix the layout bug on the mobile page, run a test and ensure the change doesn’t break the desktop version.” The agent takes over, edits files, runs the server, drives the integrated browser to perform the tests and verifies the result.

The economics are striking: free access with generous rate limits on Gemini 3 Pro that refresh every five hours.

Image generation breakthrough: Nano Banana Pro

Complementing the core release, Google DeepMind introduced Nano Banana Pro (marketed as Gemini 3 Pro Image), a state-of-the-art image generation model that goes a long way toward solving one of AI’s persistent challenges: accurate, legible text in images, across multiple languages.

Distinctive features:

Real-time information grounding via Google Search (current weather, sports scores, recipe details, etc.)

Blends up to 14 input images while keeping up to 5 people visually consistent in complex compositions

Output at up to 4K resolution, with sharp, legible typography

Day-to-night transitions, bokeh effects, camera angle adjustments—all in natural language

SynthID digital watermark for transparency

The model is available across consumer (Gemini app), professional (Google Ads, Slides, Vids), developer (API, AI Studio, Antigravity), and creative (Flow filmmaking tool) audiences.

I copied the text from this newsletter into Google AI Studio and asked it to create an infographic. This is what it produced from a very simple one-shot prompt, with no special prompt engineering. Not only did it summarise a lot of information correctly (as far as I can see), but it also did an excellent job of rendering text, something image models have struggled with until now.

Amusingly, it pictures Sam Altman as Ethan Mollick’s AI coworker, which is quite a creative leap. I assume it found his likeness through Gemini’s web grounding feature, which can optionally run web searches for additional data. I’d say this result is stunning.

My guess is that a lot of dry corporate presentations are about to be enlivened with professional-looking charts and infographics.

NotebookLM isn’t left out

Oh, and not to be outdone, Google’s NotebookLM now uses Nano Banana Pro to generate infographics from your notebooks. It’s rolling out to paid users now and to free users in the coming weeks, so soon anyone will be able to generate infographics without paying.

The competitive response

The Information reported that Sam Altman’s internal memo acknowledged Google’s resurgence could create “temporary economic headwinds” for OpenAI. “We know we have some work to do but we are catching up fast,” Altman wrote, conceding Gemini 3 was receiving “stellar reception from AI leaderboards, analysts, and consumers”.

When ChatGPT launched, it sent shockwaves through Google, whose researchers wrote “Attention Is All You Need”, the 2017 paper that introduced the Transformer architecture on which ChatGPT’s technology is based.

Now Google is on the offensive, and it has some structural advantages:

Integration across massive existing products: Search (90%+ market share), Gmail, YouTube, Maps, Android

Custom TPU hardware and massive infrastructure economies of scale

Ability to subsidise AI access with the hundreds of billions of dollars it earns from advertising each year

The pricing weapon

Gemini 3 Pro’s pricing undercuts Anthropic’s comparable model: $2.00 per million input tokens and $12.00 per million output tokens (for prompts up to 200K tokens). That’s 33% below Claude Sonnet 4.5’s $3.00 input price and 20% below its $15.00 output price, while outperforming it on many benchmarks.

Combined with generous free access through Antigravity and the Gemini app, Google has largely removed cost as a barrier for individual developers and small teams.
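The discounts differ by token type, so the real saving depends on your mix of input and output. Here is a minimal Python sketch that makes the arithmetic concrete. It uses the list prices quoted above and a hypothetical monthly workload of 10 million input and 2 million output tokens, which is our assumption, not a measured figure.

```python
# List prices in USD per million tokens, as quoted above (prompts up to 200K tokens).
PRICES = {
    "Gemini 3 Pro": {"input": 2.00, "output": 12.00},
    "Claude Sonnet 4.5": {"input": 3.00, "output": 15.00},
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """List-price cost of one workload in US dollars."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def discount(cheaper: str, pricier: str, kind: str) -> float:
    """Fractional saving of one model over another for a single price component."""
    return 1 - PRICES[cheaper][kind] / PRICES[pricier][kind]


if __name__ == "__main__":
    for kind in ("input", "output"):
        print(f"{kind} discount: {discount('Gemini 3 Pro', 'Claude Sonnet 4.5', kind):.0%}")
    # Hypothetical workload: 10M input tokens and 2M output tokens in a month.
    for model in PRICES:
        print(f"{model}: ${cost_usd(model, 10_000_000, 2_000_000):.2f}")
```

For that hypothetical mix, Gemini 3 Pro comes to $44.00 against $60.00 for Claude Sonnet 4.5, a saving of roughly 27%. Output-heavy workloads save closer to 20%, and input-heavy ones closer to 33%.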

From assistant to coworker: What this actually means

The real story isn’t the benchmarks—it’s the qualitative shift in what AI can do autonomously.

Mollick’s framing captures it: we’re moving from “human who fixes AI mistakes” to “human who directs AI work”. The model shows PhD-level intelligence with graduate-student-like limitations: statistical approaches sometimes need refinement, theorising occasionally exceeds the evidence, and human oversight remains essential. But the errors have shifted; they are now arguably more human-like than obvious hallucinations. Does that make them less of a problem, or just harder to spot?

Technology writer Simon Willison’s testing revealed both capabilities and limitations. Image transcription proved highly accurate, capturing every figure from complex benchmark tables. Audio transcription was weaker: its timestamps didn’t line up with the actual video timing.

Limitations remain, but the trajectory is unmistakable.

The stakes: A genuine multi-horse race

For three years, many assumed OpenAI had won the AI race with ChatGPT’s launch. Gemini 3 Pro proves that assumption premature.

The “rough vibes” Altman predicted may prove more than temporary if Google successfully leverages its advantages in infrastructure, distribution, and integration. This is now a genuine competition where ecosystem integration and pricing matter as much as raw capability.

For developers, researchers, and businesses, this is unambiguously positive. Competition drives innovation, lowers prices, and expands access. When a non-technical founder can win government contracts using AI coding tools, or a professor can debate statistical methodology with an autonomous agent, that is not an incremental improvement but a fundamental expansion of what individual humans can achieve.

In Other News…

🚀 OLMo 3: The Most Open AI Release Ever

The Allen Institute for AI just raised the bar for AI transparency with something few others offer: the complete recipe.

What happened: OLMo 3 provides not just model weights but the entire “model flow”—every stage, checkpoint, dataset, and dependency required to create and modify the model from scratch.

What it does:

OLMo 3-Think (32B): Billed as the best fully open “thinking” model; lets you inspect its reasoning traces

OLMo 3-Instruct (7B): Matches or beats Qwen 2.5, Gemma 3, Llama 3.1 at similar scale

OLMoTrace integration: Trace outputs back to training data in real time

Why you should care: “True openness in AI isn’t just about access—it’s about trust, accountability, and shared progress”, the announcement states. When closed frontier models make mistakes, you can’t audit the data behind them. When OLMo makes mistakes, you can trace spans of its output back to the training documents that contain them.

The stakes: OLMo represents a possible pathway to genuinely auditable AI.
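As we understand it, OLMoTrace works by finding long spans of a model’s response that appear verbatim in the training data. This shows where text may have come from rather than proving causal influence. The toy Python sketch below illustrates that matching idea on a hypothetical two-document corpus using naive word matching. The real tool searches trillions of training tokens with specialised indexes, and none of these function names come from Ai2.

```python
def _occurs(span: list[str], words: list[str]) -> bool:
    """True if `span` appears as a contiguous run inside `words` (naive scan)."""
    n = len(span)
    return any(words[j:j + n] == span for j in range(len(words) - n + 1))


def trace_spans(output: str, corpus: dict[str, str], min_len: int = 4) -> list[tuple[str, str]]:
    """Return (span, doc_id) pairs for output spans of at least `min_len` words
    that appear verbatim in some corpus document, preferring the longest match."""
    if min_len < 1:
        raise ValueError("min_len must be at least 1")
    out = output.lower().split()
    docs = {doc_id: text.lower().split() for doc_id, text in corpus.items()}
    matches = []
    i = 0
    while i <= len(out) - min_len:
        best_end, best_doc = None, None
        for doc_id, words in docs.items():
            end = i + min_len
            if not _occurs(out[i:end], words):
                continue
            # Greedily extend the span while it still occurs in this document.
            while end < len(out) and _occurs(out[i:end + 1], words):
                end += 1
            if best_end is None or end > best_end:
                best_end, best_doc = end, doc_id
        if best_end is None:
            i += 1  # No match starting here; slide forward one word.
        else:
            matches.append((" ".join(out[i:best_end]), best_doc))
            i = best_end  # Resume after the matched span.
    return matches


if __name__ == "__main__":
    # Hypothetical toy corpus; real training data runs to trillions of tokens.
    corpus = {
        "doc-a": "The quick brown fox jumps over the lazy dog",
        "doc-b": "Paris is the capital of France and its largest city",
    }
    print(trace_spans("Everyone knows Paris is the capital of France today", corpus))
```

The naive scan is fine for a toy corpus but scales very poorly. That is why tracing at training-data scale depends on purpose-built indexes.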

💻 GPT-5.1-Codex-Max: The 24-Hour Coding Sprint

OpenAI released something genuinely new: a coding model that the company says can work independently for more than 24 hours on complex tasks.

What happened: GPT-5.1-Codex-Max introduces a technique called “compaction”. As it approaches its context limit, the model prunes its history while preserving what matters, giving itself a fresh context window so it can keep working across millions of tokens.
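We don’t know the details of OpenAI’s implementation, so what follows is only a minimal Python sketch of the general idea under our own assumptions. Once a conversation history exceeds a token budget, older messages are replaced with a single summary and the most recent ones are kept verbatim. The `Message` type, the word-count tokenizer and the `summarise` placeholder are all hypothetical stand-ins; a real agent would ask the model itself to write the summary.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def count_tokens(text: str) -> int:
    # Hypothetical stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def summarise(messages: list[Message]) -> Message:
    # Hypothetical placeholder: a real agent would ask the model to write this summary.
    # Here we simply keep the first line of each older, non-empty message.
    lines = [m.text.splitlines()[0] for m in messages if m.text.strip()]
    return Message("system", "Summary of earlier work: " + " | ".join(lines))


def compact(history: list[Message], budget: int, keep_recent: int = 4) -> list[Message]:
    """Replace older messages with one summary once the history exceeds `budget` tokens."""
    total = sum(count_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # Still fits, or nothing old enough to compact: leave untouched.
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarise(older)] + recent


if __name__ == "__main__":
    history = [Message("assistant", f"Step {i}: ran tests, fixed bug {i}") for i in range(10)]
    compacted = compact(history, budget=30)
    print(len(compacted))  # one summary plus the four most recent messages
    print(compacted[0].text)
```

A production version would also have to cope with a summary that is itself too long, for example by compacting again.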

What it does:

Works on project-scale refactors and debugging sessions autonomously

77.9% on SWE-bench Verified (real GitHub issues)

30% fewer thinking tokens than GPT-5.1-Codex at the same performance

OpenAI says its engineers have shipped about 70% more pull requests since adopting Codex

Why to be cautious: The model is powerful enough to require special safeguards. It runs in a secure sandbox by default (limited file writes, network access disabled), with dedicated monitoring to detect malicious activity.

The lesson: When AI can work autonomously for 24 hours, the bottleneck shifts from “can AI do this” to “should AI do this unsupervised.” Security and monitoring become the critical infrastructure.

🧪 Kosmos: Six Months of Research in One Day

A spinout from FutureHouse just made academic research look like it’s running in slow motion.

What happened: Kosmos is an “AI Scientist” that, on average, reads 1,500 papers and runs 42,000 lines of analysis code in a single run. Beta users estimate it completes in one day what would take them six months.

What it does:

79.4% of statements in its reports judged accurate by independent scientists

Made seven validated discoveries across neuroscience, materials science, and statistical genetics

Three discoveries reproduced findings from unpublished manuscripts (taking 4 months in the original)

Four discoveries made novel contributions to scientific literature

Pricing: $200 per run, free tier for academics

Why to be cautious: Kosmos “often goes down rabbit holes or chases statistically significant yet scientifically irrelevant findings,” according to the announcement. Users typically run it multiple times on the same objective to sample various research avenues. This isn’t autonomous science yet—it’s a research accelerant that still needs expert direction.

🛠️ Building Startups Without Engineers: Three YC Stories

Three Y Combinator startups are proving something remarkable: you can win government contracts without knowing how to code.

What happened: Vulcan Technologies secured state and federal government contracts in four months with three founders—only one properly technical—using Claude Code to build their entire platform.

The impact: Vulcan’s work is credited with cutting the average price of a new Virginia home by $24,000, saving Virginians over $1 billion annually. Virginia’s governor signed Executive Order 51 mandating all state agencies use “agentic AI regulatory review”. The company raised an $11 million seed round.

Key insight: “If you understand language and you understand critical thinking, you can use Claude Code well. I actually think there might be some marginal benefit for people who studied humanities”, says Vulcan CEO Tanner Jones.

💬 ChatGPT Group Chats: Social AI Arrives

OpenAI added something deceptively simple: group chats where up to 20 people can collaborate with each other and ChatGPT simultaneously.

What it does:

ChatGPT follows conversation flow and decides when to respond (not every message)

Can react with emojis and reference profile photos

Powered by GPT-5.1 Auto (which picks the best model for each prompt)

Why you should care: This shifts AI from tool to participant. Planning trips with friends, group project work, family event coordination—ChatGPT becomes a contributor, not just a resource you query.

🏢 Microsoft, NVIDIA, and Anthropic: $15 Billion Power Play

Three tech giants just reshaped the AI infrastructure landscape with investments that make Claude the first frontier model available on all three major clouds.

What happened: Microsoft committed up to $5 billion, NVIDIA up to $10 billion, and Anthropic committed to purchase $30 billion of Azure compute capacity—plus up to one gigawatt of additional capacity.

Why you should care: Claude (Sonnet 4.5, Opus 4.1, Haiku 4.5) is now available on AWS, Azure, and Google Cloud. Amazon remains Anthropic’s primary training partner, but the multi-cloud strategy expands enterprise access significantly. Because organisations often standardise on a single cloud, being available on all three makes Claude an option whichever cloud a customer has chosen. This is smart.

💸 Google CEO Warns of AI Investment “Irrationality”

Even the winners think the boom might be a bubble.

What happened: Alphabet CEO Sundar Pichai told the BBC the current trillion-dollar AI investment boom contains “elements of irrationality”. He also warned that no company, including Google, would be immune if the AI bubble bursts.

The numbers:

Alphabet market cap: $3.5 trillion (doubled in 7 months)

NVIDIA valuation: $5 trillion

$1.4 trillion in deals around OpenAI with revenues less than one thousandth of planned investment

Why to be cautious: Google admits slippage on its climate targets due to AI’s intensive energy needs. Pichai warns of “immense” energy demands and “societal disruptions” to jobs, saying those who adapt will do better. The dotcom comparisons are explicit, though Pichai believes AI will be as profound as the internet.

The lesson: When even the CEO benefiting most from AI investment warns of irrationality, listen. This doesn’t mean AI isn’t transformative—it means valuations and expectations may be running ahead of immediate economic reality.

🚀 Your Weekend Project

Pick one:

Test Gemini 3: Sign up for Google AI Studio (free tier) and give it a complex research question in your field.

Try Antigravity’s agentic coding: Download Google Antigravity (free with generous rate limits) and assign it a small project: “Build me a simple web app that [does specific thing you’ve been putting off].”

Try Nano Banana Pro image generation: Challenge it to generate something complex that includes text, such as an infographic.

Explore OLMo’s transparency tools: Visit OLMoTrace and test tracing an AI output back to its training data.

🏗️ About Barnacle Labs

At Barnacle Labs we build AI systems that actually ship. From the National Cancer Institute’s NanCI app to AI systems deployed across biotech and enterprise clients, we’re the “breakthroughs, not buzzwords” team.

Got an AI challenge that’s stuck? Reply to this email—let’s talk.

The voices worth listening to in AI are the ones building, not talking. See you next week.